The traditional request-response cycle is undergoing a fundamental shift. As applications become more complex and users demand sub-second interaction times globally, centralizing compute resources in a single region is no longer viable. Welcome to the era of edge computing.

Introduction to Edge Compute

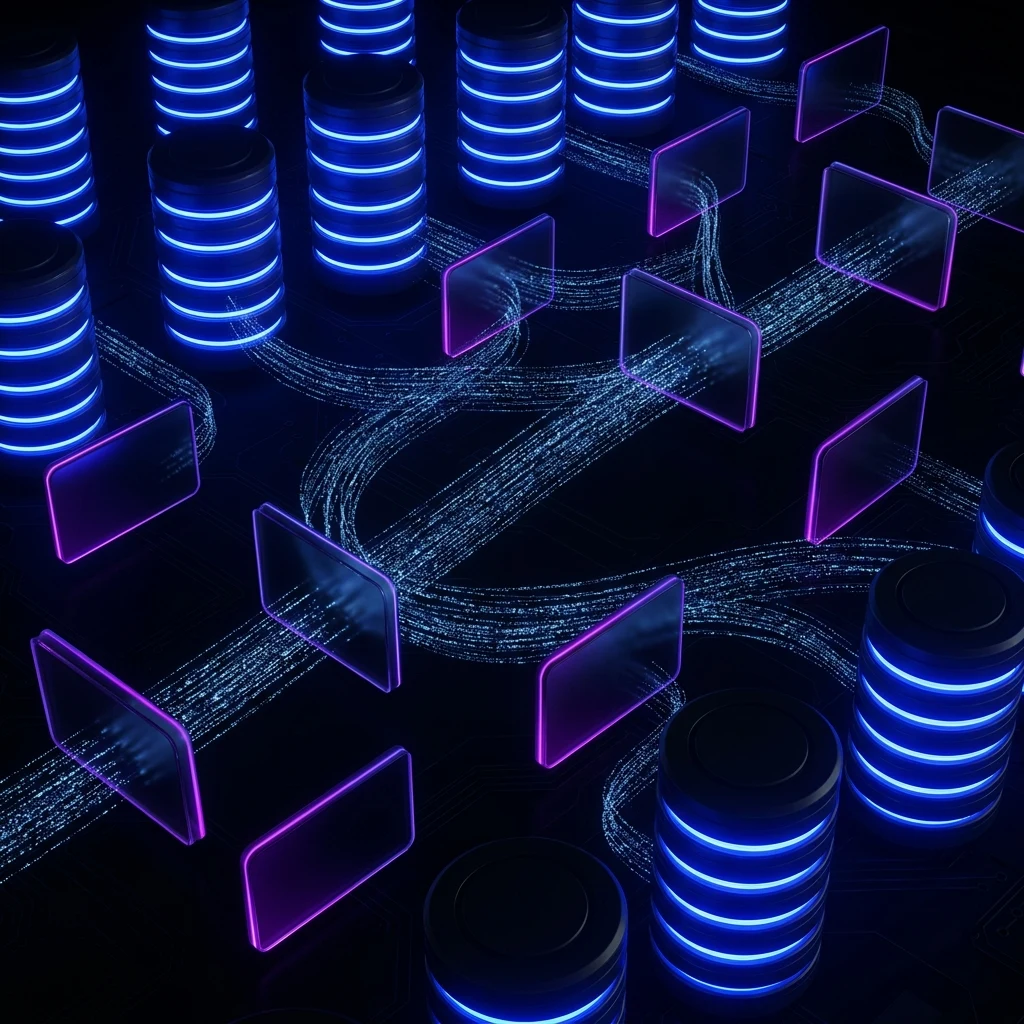

Edge computing moves computation away from centralized data centers and closer to the data source or end user. This architectural paradigm shift isn’t just about CDN caching anymore; it’s about executing complex application logic at the network’s periphery.

By leveraging distributed networks, we can intercept requests, run middleware, and even interact with globally distributed databases before the request ever reaches a “main” origin server.

“The edge isn’t a place; it’s a capability. It’s the ability to run compute wherever it makes the most sense for latency and performance.”

The Latency Problem

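Physics remains our biggest constraint. The speed of light limits how fast data can travel, and light in optical fiber moves at only about two thirds of its speed in a vacuum. A single round trip from Tokyo to a server in us-east-1 therefore cannot drop much below 100 milliseconds, and once real-world routing and connection setup are added, a full request often takes hundreds of milliseconds, regardless of your application’s optimization.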
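To put a rough number on that constraint, here is a minimal sketch that estimates the lower bound on round-trip time between two locations. The coordinates, the fiber speed of about 200 km per millisecond, and the helper names (`greatCircleKm`, `minRoundTripMs`) are assumptions chosen for illustration; real network paths are longer than the great-circle distance, so measured latency will be higher.

```javascript
// Rough physical floor on round-trip time, assuming a straight great-circle fiber path.
const EARTH_RADIUS_KM = 6371;
const FIBER_SPEED_KM_PER_MS = 200; // assumption: roughly two thirds of c (~300 km/ms)

function toRadians(degrees) {
  return (degrees * Math.PI) / 180;
}

// Great-circle distance between two { lat, lon } points (haversine formula).
function greatCircleKm(a, b) {
  const dLat = toRadians(b.lat - a.lat);
  const dLon = toRadians(b.lon - a.lon);
  const h =
    Math.sin(dLat / 2) ** 2 +
    Math.cos(toRadians(a.lat)) * Math.cos(toRadians(b.lat)) * Math.sin(dLon / 2) ** 2;
  return 2 * EARTH_RADIUS_KM * Math.asin(Math.sqrt(h));
}

// Time for light in fiber to travel there and back, in milliseconds.
function minRoundTripMs(a, b) {
  return (2 * greatCircleKm(a, b)) / FIBER_SPEED_KM_PER_MS;
}

// Approximate coordinates, for illustration only.
const tokyo = { lat: 35.68, lon: 139.69 };
const northernVirginia = { lat: 38.95, lon: -77.45 }; // rough location of us-east-1

console.log(`${minRoundTripMs(tokyo, northernVirginia).toFixed(0)} ms minimum round trip`);
```

Under these assumptions the floor comes out a little above 100 ms for one round trip, before any TLS handshake, queuing, or server processing time is counted.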

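Edge middleware can avoid that round trip by answering from a location near the user:

    import { next } from '@astrojs/edge';

    export default function middleware(request, context) {
      // Execute logic at the edge.
      // x-vercel-ip-city is a geolocation header set by Vercel from the client IP.
      const city = request.headers.get('x-vercel-ip-city');

      if (city === 'Tokyo') {
        // Respond directly from the edge, with no trip to the origin.
        return new Response('Konnichiwa!');
      }

      // Otherwise, pass the request through to the next handler.
      return next();
    }

Distributed Architecture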

Designing for the edge requires rethinking state management. When compute runs everywhere, where does the data live? We see emerging patterns like globally replicated key-value stores and CRDTs (Conflict-free Replicated Data Types) solving these synchronization challenges.
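As one concrete illustration of the CRDT idea, here is a minimal sketch of a grow-only counter (G-Counter), one of the simplest CRDTs. The class name `GCounter` and the replica names are hypothetical; production systems would typically use an existing CRDT library or a database that provides these semantics.

```javascript
// Grow-only counter: each replica increments only its own slot,
// so concurrent updates in different regions never conflict.
class GCounter {
  constructor(replicaId, counts = {}) {
    this.replicaId = replicaId;
    this.counts = { ...counts };
  }

  increment(amount = 1) {
    this.counts[this.replicaId] = (this.counts[this.replicaId] ?? 0) + amount;
  }

  // The counter value is the sum of every replica's slot.
  value() {
    return Object.values(this.counts).reduce((sum, n) => sum + n, 0);
  }

  // Merging takes the per-replica maximum. The operation is commutative,
  // associative, and idempotent, so replicas converge in any sync order.
  merge(other) {
    const merged = { ...this.counts };
    for (const [id, n] of Object.entries(other.counts)) {
      merged[id] = Math.max(merged[id] ?? 0, n);
    }
    return new GCounter(this.replicaId, merged);
  }
}

// Two edge locations count page views independently, then exchange state.
const tokyoViews = new GCounter('tokyo');
const frankfurtViews = new GCounter('frankfurt');
tokyoViews.increment();
tokyoViews.increment();
frankfurtViews.increment();

const mergedInTokyo = tokyoViews.merge(frankfurtViews);
const mergedInFrankfurt = frankfurtViews.merge(tokyoViews);
console.log(mergedInTokyo.value(), mergedInFrankfurt.value()); // 3 3
```

Both replicas arrive at the same total without coordinating, which is the property that makes this family of data types attractive when writes can land in any region.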

Stateless Edge

Pure functions executing close to the user, ideal for routing, auth checks, and A/B testing.
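A/B testing is a good fit for this model because variant assignment can be a pure function of the request. The sketch below is one possible approach, assuming a stable user identifier is available (for example from a cookie); the function names `fnv1a` and `assignVariant` and the experiment name are hypothetical.

```javascript
// 32-bit FNV-1a hash over the string's UTF-16 code units.
// Not cryptographic; it only needs to spread users evenly across buckets.
function fnv1a(text) {
  let hash = 0x811c9dc5; // FNV offset basis
  for (let i = 0; i < text.length; i++) {
    hash ^= text.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193); // FNV prime, 32-bit multiply
  }
  return hash >>> 0; // convert to an unsigned 32-bit integer
}

// Deterministic assignment: the same user and experiment always map to the
// same variant, at any edge location, with no shared state.
function assignVariant(userId, experiment, variants = ['control', 'treatment']) {
  const bucket = fnv1a(`${experiment}:${userId}`) % variants.length;
  return variants[bucket];
}

console.log(assignVariant('user-42', 'new-checkout'));
```

Including the experiment name in the hashed string keeps assignments independent across experiments, so a user who lands in one experiment's treatment group is not automatically in every other treatment group.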

Stateful Edge

Compute co-located with distributed databases, allowing complex queries without origin trips.